Beyond the Words: How to Prevent Hidden Bias in AI Voice Interviews

Imagine your top candidate—brilliant, experienced, perfect for the role—is quietly filtered out of your hiring pipeline. Not because of their skills, but because they have a regional accent the screening AI misunderstood. This isn’t science fiction; it’s a real risk known as algorithmic bias, and it can silently undermine the fairness of your entire recruitment process.

When an AI interviews a candidate, its first job is to turn spoken words into text. But if the AI was primarily trained on one type of voice, it can struggle with diverse accents, dialects, or non-native speakers. This leads to transcription errors that create a ripple effect of bias, impacting everything from keyword analysis to sentiment scoring.

This graphic explains the three main bias types in AI voice transcription and their interactions.


The Domino Effect: From Mistranscription to Misjudgment

An inaccurate transcript is more than just a typo; it’s a corrupted dataset. Let’s say a candidate says, “I led a grand project,” but the AI transcribes it as “I led a bland project.” This single error can trigger a cascade of flawed analysis:

- Keyword Extraction: The system fails to register a positive power word (“grand”).

- Sentiment Analysis: The model incorrectly flags the candidate’s tone as neutral or negative.

- Confidence Scoring: The AI might interpret the misheard word as a lack of clarity or conviction.
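To make the cascade concrete, here is a minimal sketch of how one substituted word shows up at two levels. The first is the transcript itself, measured by word error rate (WER), the standard speech-to-text metric: word-level edit distance divided by the number of reference words. The second is a downstream score. The keyword list and the `keyword_score` rule are hypothetical, invented for illustration; real screening systems are far more complex, but the point carries over: the scorer only ever sees the transcript, never what the candidate actually said.

```python
# Hypothetical list of "positive power words"; not taken from any real product.
POSITIVE_KEYWORDS = {"grand", "led", "launched", "delivered"}


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    # dp[i][j] = minimum edits to turn the first i reference words into the first j hypothesis words
    dp = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dp[i][0] = i
    for j in range(len(hyp) + 1):
        dp[0][j] = j
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(
                dp[i - 1][j] + 1,         # deletion
                dp[i][j - 1] + 1,         # insertion
                dp[i - 1][j - 1] + cost,  # substitution or match
            )
    return dp[len(ref)][len(hyp)] / len(ref)


def keyword_score(transcript: str) -> int:
    """Toy, hypothetical scorer: count how many positive keywords appear."""
    return len(set(transcript.lower().split()) & POSITIVE_KEYWORDS)


spoken = "I led a grand project"
transcribed = "I led a bland project"

print(f"WER: {word_error_rate(spoken, transcribed):.2f}")
print("Score if heard correctly:", keyword_score(spoken))
print("Score from the transcript:", keyword_score(transcribed))
```

A WER of 0.20 sounds small, yet it halves this toy score, because the one word that was lost happened to be the one that mattered.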

Suddenly, a strong, confident answer is logged as weak and unenthusiastic. When this happens repeatedly for certain demographic groups, it systematically disadvantages qualified people, directly contradicting diversity and inclusion goals. As legal and ethical scrutiny of AI in hiring grows, organizations are responsible for ensuring their tools are fair.

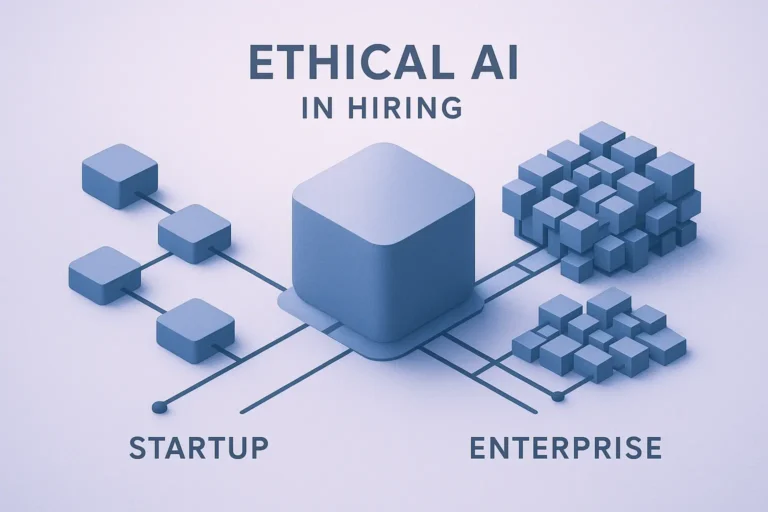

A Framework for Fairness: How to Mitigate Voice AI Bias

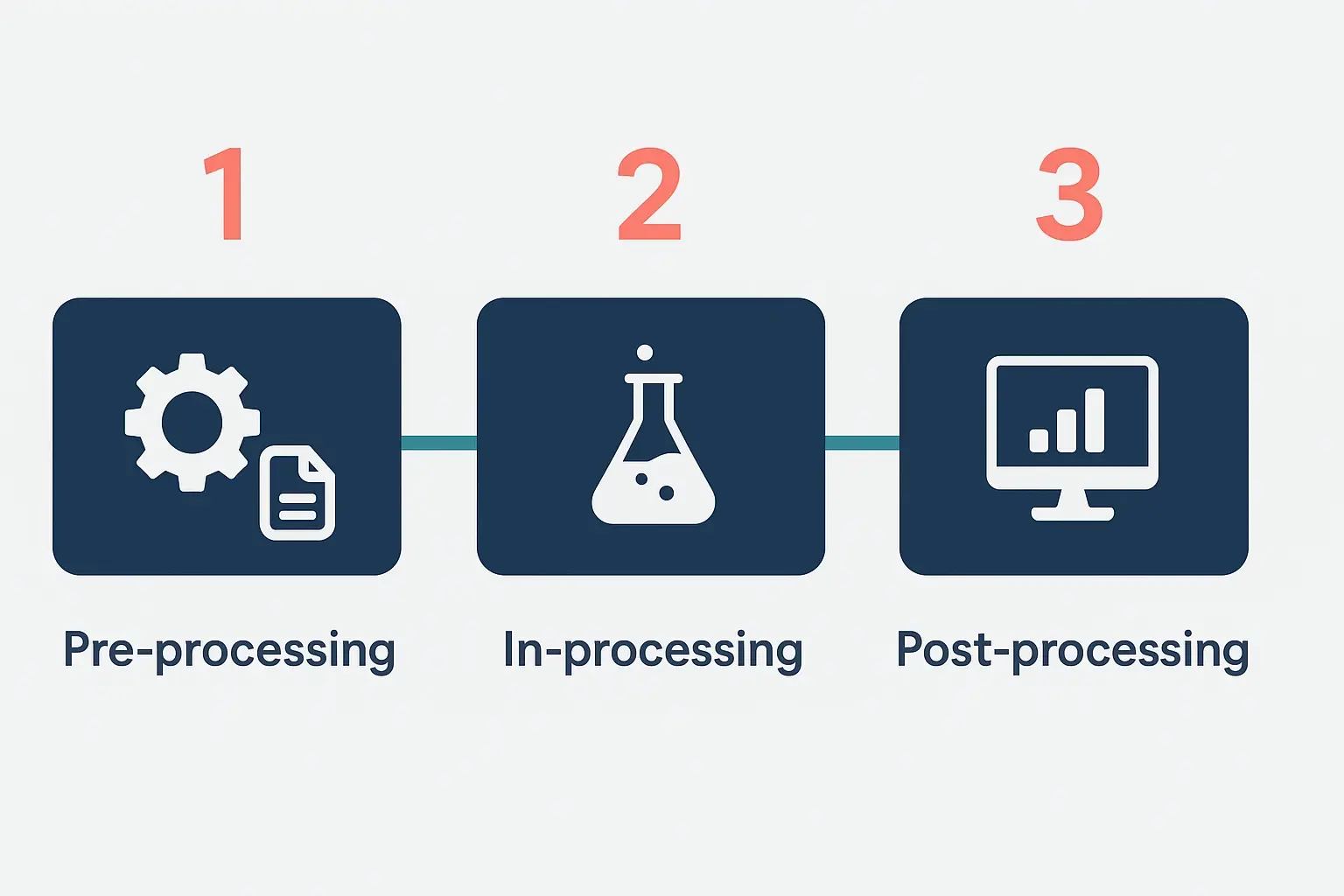

Preventing this bias requires a proactive, multi-stage approach. Bias mitigation is commonly organized into three phases, so that fairness is built in rather than bolted on.

- Pre-processing (The Foundation): It all starts with the data. An AI is only as unbiased as the information it learns from. This stage involves intentionally training the system on vast, diverse audio datasets that include a wide range of accents, dialects, genders, and speech patterns.

- In-processing (The Build): During development, the algorithm itself can be designed to promote fairness. For example, fairness constraints or penalty terms can be added to the training objective so that performance gaps between demographic subgroups count against the model, teaching the AI to prioritize equitable performance over raw overall accuracy.

- Post-processing (The Check): After the analysis is complete, a final check is crucial. This often involves a “human-in-the-loop” review, where people validate the AI’s conclusions, especially for edge cases. It also includes regular audits to test for and correct any emerging biases in the system’s analytical output.
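The regular audit in the post-processing phase can be sketched as a per-group comparison of transcription quality. This is a minimal sketch under stated assumptions: the group labels and WER values are hypothetical sample data, and the rule "flag any group whose mean WER is more than 25% worse than the best group" is an illustrative threshold, not an industry standard or a legal test. Flagged groups are the ones a human-in-the-loop reviewer would look at first.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical audit records: (speaker group label, WER for one interview).
# In practice, WER would come from comparing the AI transcript with a human-verified one.
AUDIT_RECORDS = [
    ("group_a", 0.05), ("group_a", 0.07), ("group_a", 0.06),
    ("group_b", 0.12), ("group_b", 0.15), ("group_b", 0.09),
    ("group_c", 0.06), ("group_c", 0.08), ("group_c", 0.07),
]

# Hypothetical tolerance: a group's mean WER may be at most 1.25x the best group's.
MAX_RATIO = 1.25


def audit_by_group(records, max_ratio=MAX_RATIO):
    """Return each group's mean WER and the sorted list of groups exceeding the tolerance."""
    by_group = defaultdict(list)
    for group, wer in records:
        by_group[group].append(wer)
    group_wer = {group: mean(values) for group, values in by_group.items()}
    best = min(group_wer.values())
    flagged = sorted(g for g, w in group_wer.items() if w > best * max_ratio)
    return group_wer, flagged


group_wer, flagged = audit_by_group(AUDIT_RECORDS)
for group, wer in sorted(group_wer.items()):
    print(f"{group}: mean WER {wer:.3f}")
print("Flag for human review:", flagged)
```

Comparing each group against the best-performing group, rather than against the overall average, keeps a large majority group from masking a gap for a smaller one.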

Demonstrates bias mitigation steps from data prep to human-in-the-loop review.


The Best Defense: AI That Understands Like a Human

While mitigation frameworks are essential, the most effective solution is an AI that is fundamentally better at understanding human conversation. Instead of relying on rigid keyword matching, a truly human-like AI comprehends context, nuance, and intent—much like a person would.

This advanced approach is inherently more robust against bias. By focusing on the meaning behind the words, rather than just the words themselves, it can accurately evaluate a candidate’s response regardless of their accent or dialect. This ensures every candidate is assessed on the quality of their ideas, not the perfection of their speech.

Illustrates how Upfound AI’s human-like core supports fair voice processing.


Frequently Asked Questions

What is accent bias in AI?

Accent bias occurs when an AI system, particularly in speech-to-text technology, demonstrates lower accuracy for speakers with non-dominant or regional accents because it was primarily trained on a limited set of voice data.

Can biased AI really lead to discrimination?

Yes. If an AI tool consistently scores candidates from a specific national origin or region lower due to transcription errors, it can lead to discriminatory hiring patterns, which may have legal consequences under laws such as Title VII of the Civil Rights Act of 1964.

Isn’t high overall accuracy good enough?

Not necessarily. A model can have 95% overall accuracy but still be highly inaccurate for a specific demographic subgroup. True fairness requires high accuracy across all groups, ensuring no single one is disadvantaged.
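The arithmetic behind this answer is easy to check with hypothetical numbers. Suppose a test set holds 900 utterances from majority-accent speakers and 100 from a regional-accent subgroup; the counts below are invented for illustration. A model that is right 98% of the time on the first group and only 68% of the time on the second still reports 95% overall, because the larger group dominates the average.

```python
# Hypothetical test-set results; the counts are invented for illustration.
GROUPS = {
    "majority accent": {"total": 900, "correct": 882},  # 98% accurate
    "regional accent": {"total": 100, "correct": 68},   # 68% accurate
}


def accuracy_report(groups):
    """Return overall accuracy and per-group accuracy from correct/total counts."""
    total = sum(g["total"] for g in groups.values())
    correct = sum(g["correct"] for g in groups.values())
    per_group = {name: g["correct"] / g["total"] for name, g in groups.items()}
    return correct / total, per_group


overall, per_group = accuracy_report(GROUPS)
print(f"Overall accuracy: {overall:.0%}")  # (882 + 68) / 1000
for name, acc in per_group.items():
    print(f"{name}: {acc:.0%}")
```

Reporting the per-group figures alongside the overall one is what exposes the 30-point gap that the single headline number hides.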

Your Next Step to Fairer Hiring

Understanding and addressing algorithmic bias isn’t just a technical challenge—it’s a commitment to building a more equitable and effective hiring process. By prioritizing fairness in the AI tools you choose, you ensure that you’re evaluating talent, not accents, and making hiring decisions that are truly based on merit.