Building an Internal HR Compliance Framework for Automated Recruitment

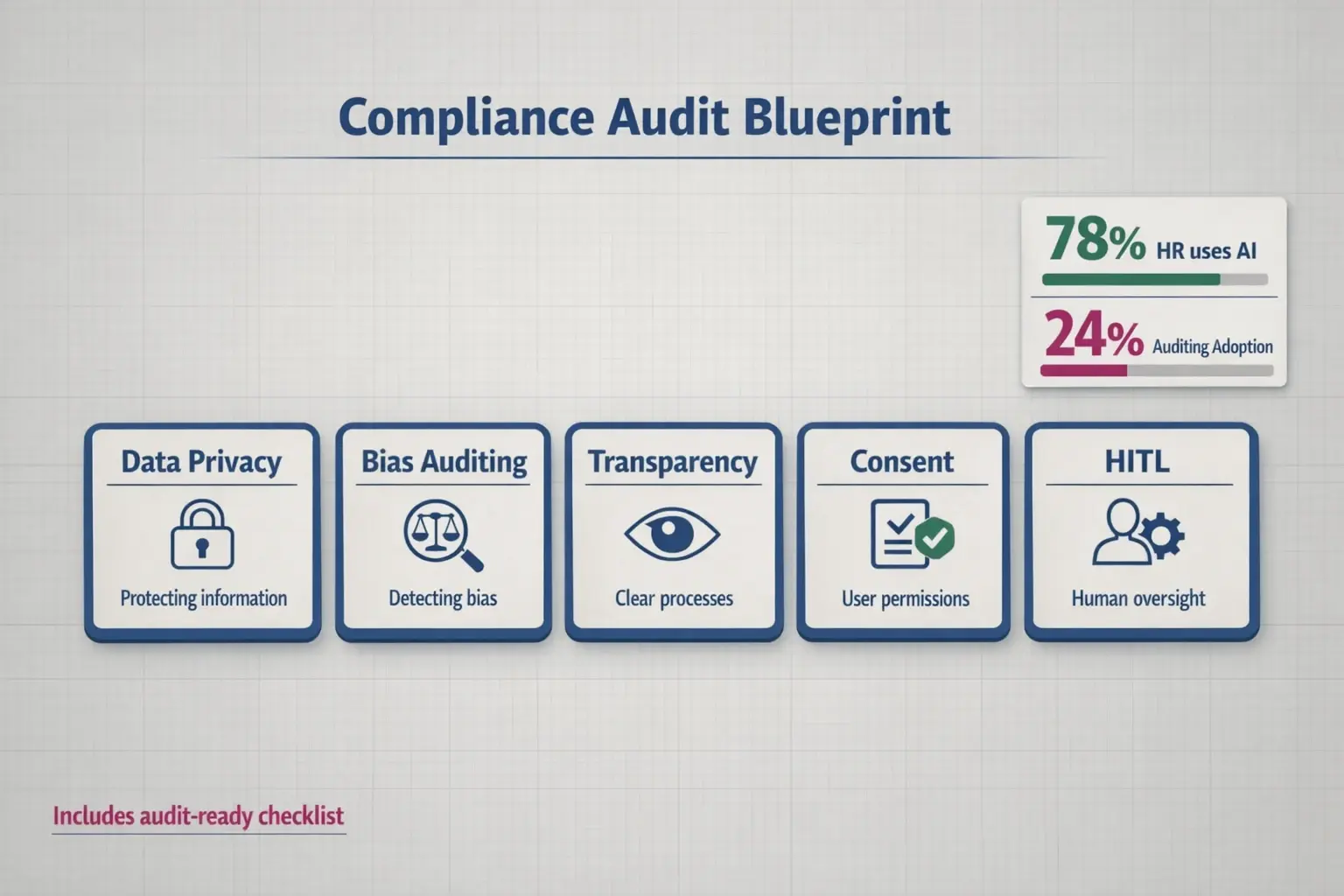

If you feel like the gap between adopting AI recruitment tools and actually governing them is widening, you aren’t alone. While 78% of HR managers now utilize AI for record-keeping and sourcing, only 24% have a formal bias auditing protocol in place.

That statistic represents a massive operational risk.

Most advice you’ll find today swings between two extremes: broad, theoretical educational guides or dense, terrifying legal jargon. Neither helps you configure your ATS tomorrow morning. To build a defensible strategy, you need an Operational Framework—a bridge between legislative requirements (like the EU AI Act and NYC Local Law 144) and technical execution within your HR ecosystem.

The Compliance Audit Blueprint

Compliance isn’t a one-time certificate; it is a continuous loop of assessment. Your framework must rest on five pillars: Data Privacy, Bias Auditing, Transparency, Consent, and Human-in-the-Loop (HITL) oversight.

The most critical technical hurdle here is the “Human-in-the-Loop” requirement. Under the EU AI Act, AI systems used for recruitment are classified as “High Risk.” This means purely automated rejections without human oversight can be legally precarious. Your internal policy must dictate exactly when a human recruiter reviews AI scores. For example, it could mandate a human review for any candidate scoring within 10% of the cutoff threshold.
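The borderline-review rule above can be sketched in a few lines. This is a minimal illustration, not a vendor API: the function names, the route labels, and the reading of “within 10%” as 10% of the cutoff value (rather than 10 percentage points) are all assumptions you would replace with your own policy definitions.

```python
def needs_human_review(score: float, cutoff: float, band: float = 0.10) -> bool:
    """True when the score falls within `band` (a fraction of the cutoff) of the cutoff.

    Assumption: "within 10% of the cutoff" means |score - cutoff| <= 0.10 * cutoff.
    """
    return abs(score - cutoff) <= band * cutoff


def route_candidate(score: float, cutoff: float, band: float = 0.10) -> str:
    """Return a hypothetical routing label for one AI-scored candidate."""
    if needs_human_review(score, cutoff, band):
        return "human_review"  # borderline: a recruiter must look at this one
    return "advance" if score >= cutoff else "decline"


# Example with a cutoff of 70: scores from 63 to 77 go to a recruiter.
print(route_candidate(65, 70))
print(route_candidate(90, 70))
print(route_candidate(40, 70))
```

Your policy may well require human sign-off on every decline, not only the borderline ones; in that case the `"decline"` branch would also feed a review queue.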

Navigating the Regulatory Cross-Walk

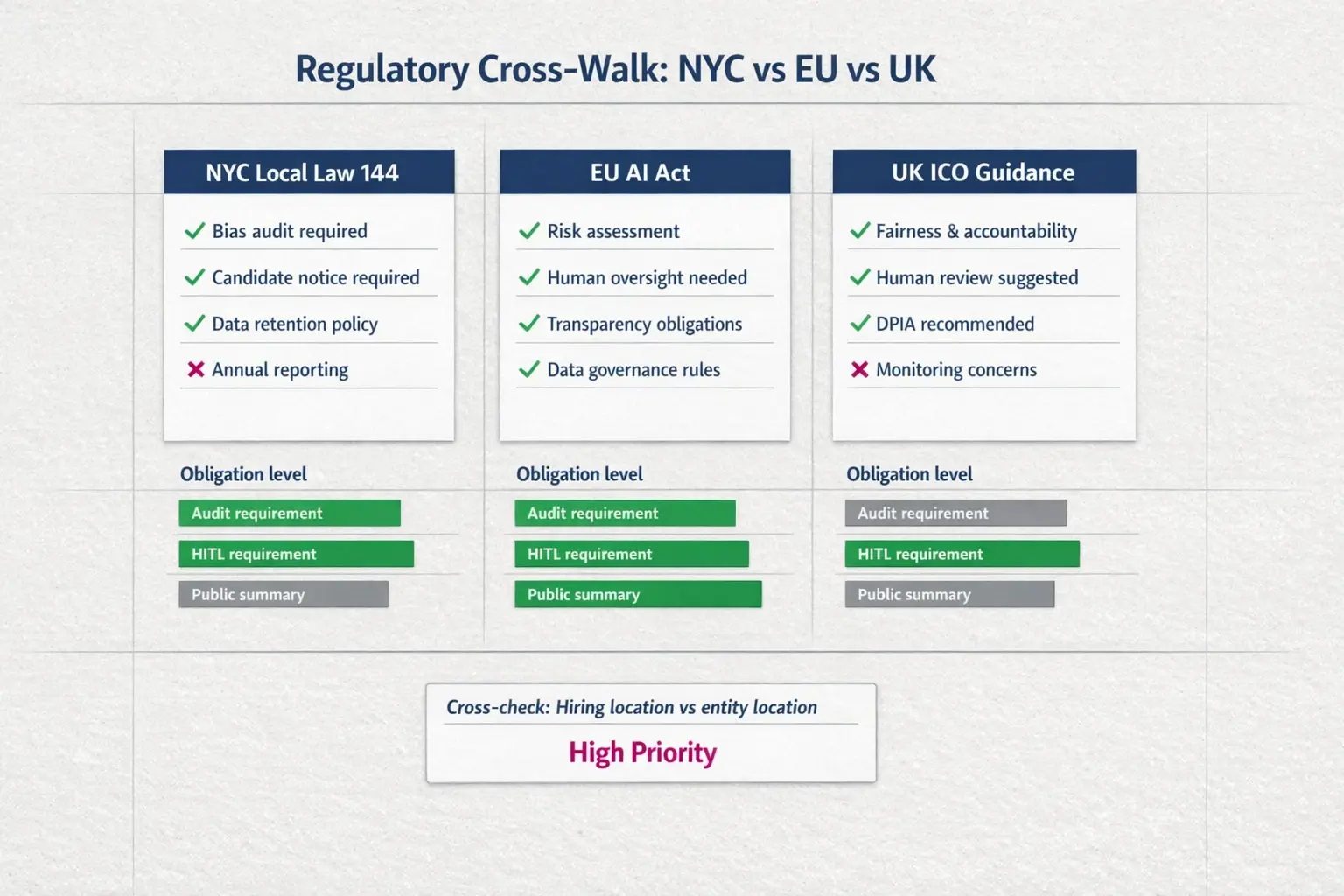

One of the biggest friction points for global hiring teams is jurisdictional overlap. Do you follow NYC rules if your headquarters are in London but you’re hiring remote workers in Manhattan?

Generally, the answer leans toward the strictest applicable standard. NYC Local Law 144 stands out because it mandates public transparency: you must publish a summary of the bias audit results for your automated employment decision tools (AEDTs). In contrast, GDPR and the EU AI Act focus heavily on data minimization and the right to explanation.
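To make the audit summary concrete: bias audits of this kind commonly report an impact ratio per demographic category, meaning each category's selection rate divided by the selection rate of the most selected category. The sketch below computes that ratio under simplifying assumptions. The group labels and counts are invented for illustration, and a real audit must follow the categories and methodology your independent auditor applies.

```python
def impact_ratios(selected: dict[str, int], applicants: dict[str, int]) -> dict[str, float]:
    """Selection rate of each category divided by the highest selection rate.

    `selected` and `applicants` map a category label to a head count.
    Categories with zero applicants are skipped.
    """
    rates = {
        group: selected.get(group, 0) / total
        for group, total in applicants.items()
        if total > 0
    }
    if not rates:
        raise ValueError("no categories with applicants")
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no candidates were selected in any category")
    return {group: rate / top_rate for group, rate in rates.items()}


# Hypothetical counts, for illustration only.
ratios = impact_ratios(
    selected={"group_a": 50, "group_b": 20},
    applicants={"group_a": 100, "group_b": 80},
)
print(ratios)
```

A low ratio for one category does not prove discrimination on its own, but it is the kind of signal that should trigger a closer look at the tool and its training data.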

The DPO’s Manual for Automated Hiring

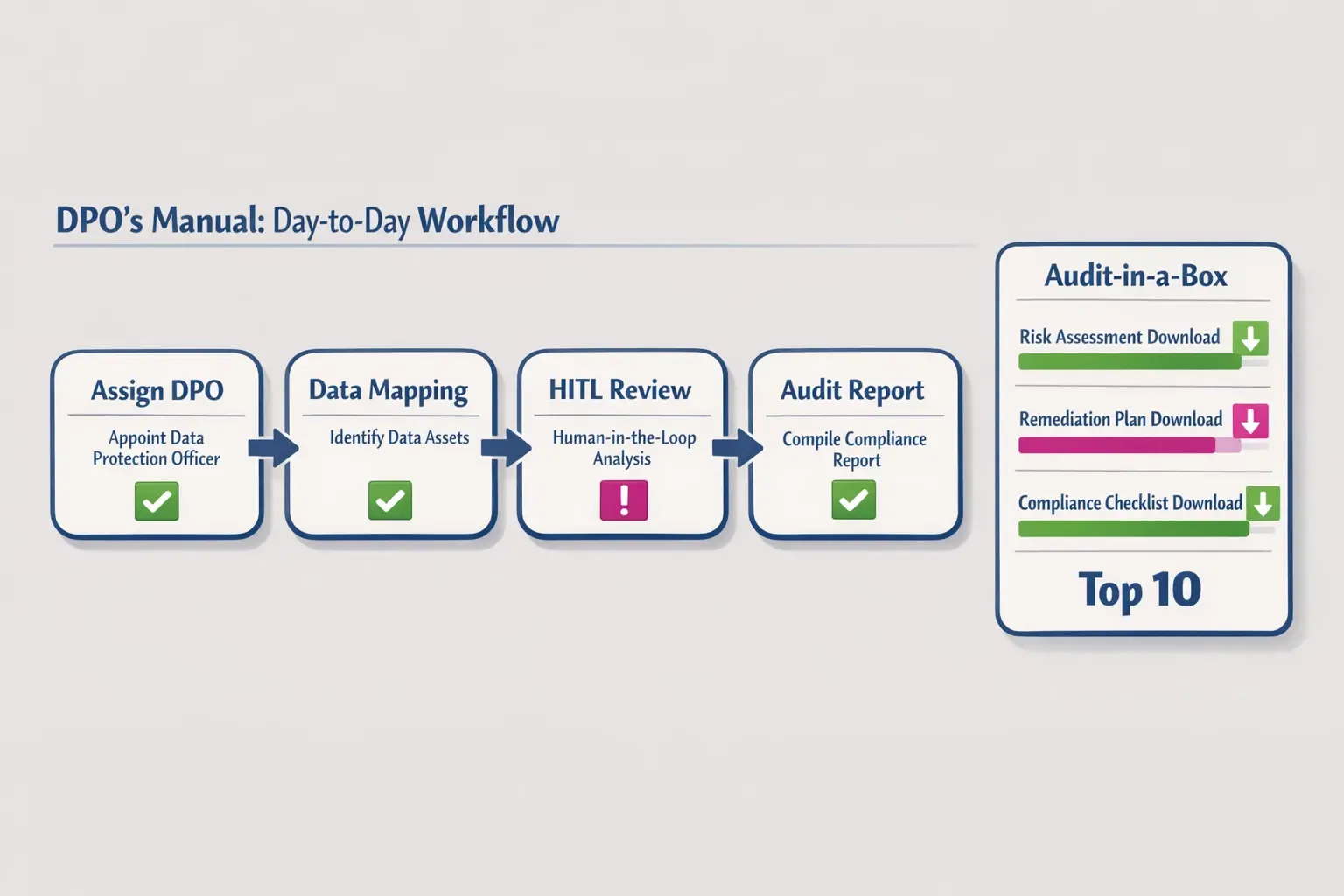

For your Data Protection Officer (DPO) or HR Operations lead, “compliance” translates to daily workflows. A robust framework requires a “Consent Management System” that triggers before a candidate enters the funnel.

Daily compliance work also involves training recruiters to spot proxy bias. An algorithm might not exclude based on gender (illegal), but it might downgrade resumes that contain the phrase “women’s college” or show gaps in employment history (often a proxy for maternity leave). Your DPO’s manual should include specific scripts for explaining AI decisions to candidates who exercise their right to ask, “Why was I rejected?”
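The consent trigger described in this section can be pictured as a gate in front of the screening funnel. The sketch below is a hypothetical illustration: `ConsentRecord`, `next_stage`, and the stage names are assumptions, not part of any particular ATS or consent platform.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ConsentRecord:
    candidate_id: str
    ai_screening_consent: bool  # did the candidate agree to automated screening?
    recorded_at: datetime       # kept so the decision can be evidenced later


def next_stage(record: Optional[ConsentRecord]) -> str:
    """Decide where a candidate goes before any AI tool touches their data."""
    if record is None:
        return "request_consent"   # nothing on file: ask first
    if not record.ai_screening_consent:
        return "manual_screening"  # declined: keep the candidate, skip the AI
    return "ai_screening"


granted = ConsentRecord("cand-001", True, datetime.now(timezone.utc))
print(next_stage(granted))
print(next_stage(None))
```

Routing a declining candidate to manual screening, instead of dropping them, is a design choice made here for illustration; confirm with your DPO which alternative process your jurisdictions expect.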

Technical Integration: Beyond Policy Documents

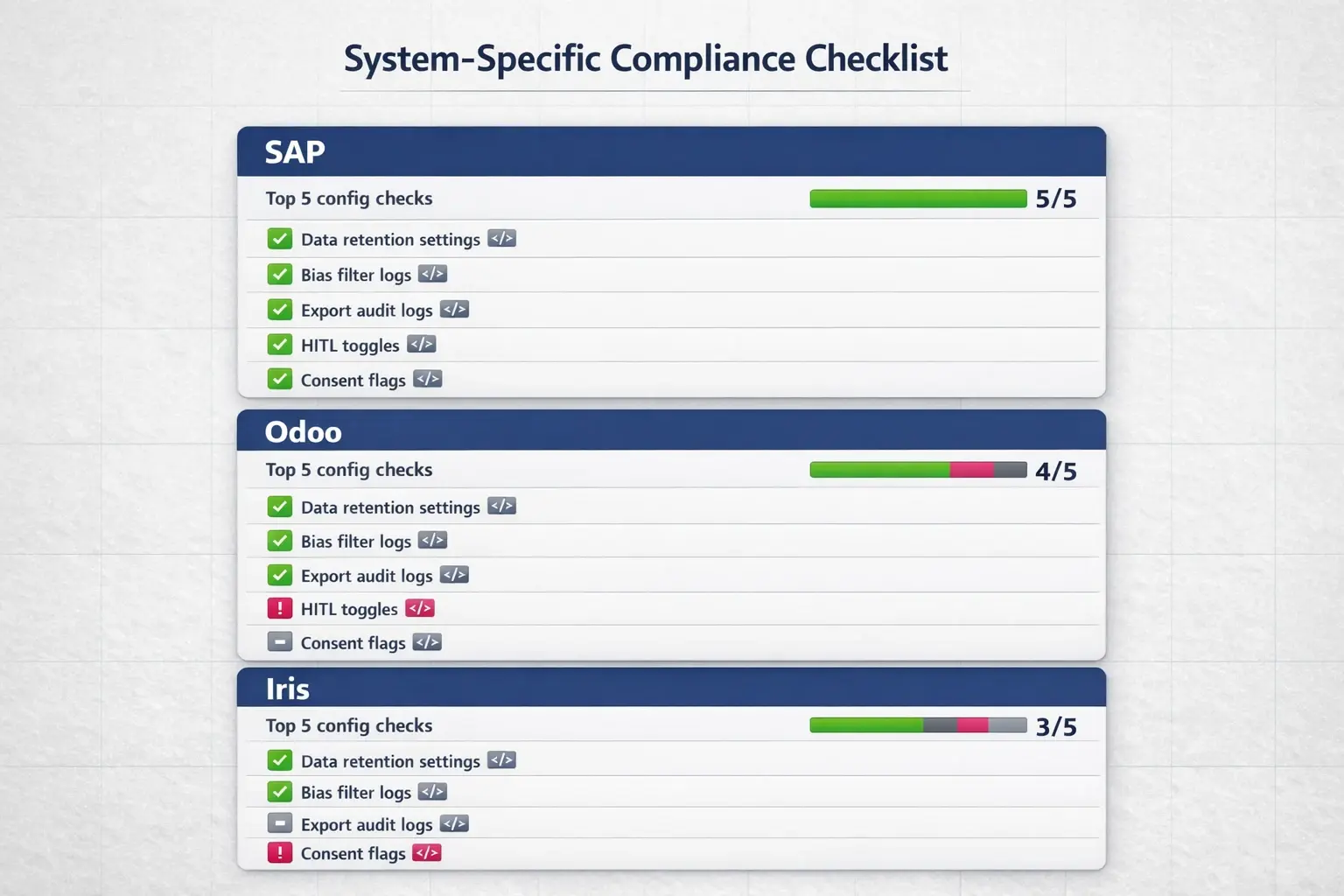

The final step is configuration. A policy document is useless if your HRIS isn’t set up to enforce it. Whether you are using SAP, Odoo, or Iris, you must verify how these systems handle data retention.

For instance, does your integration automatically purge candidate voice data after the legally mandated period? Compliance should be hard-coded into your tech stack.
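One way to picture a hard-coded retention rule is a scheduled job that flags anything older than the configured period. The sketch below assumes a hypothetical `CandidateArtifact` record and placeholder retention periods. The real periods depend on your jurisdictions and must come from counsel, and the actual deletion call depends on your HRIS.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class CandidateArtifact:
    candidate_id: str
    kind: str               # e.g. "voice", "resume"
    collected_at: datetime


# Placeholder periods for illustration only; these are not legal guidance.
RETENTION = {
    "voice": timedelta(days=180),
    "resume": timedelta(days=365),
}


def due_for_purge(artifacts, now, retention=RETENTION):
    """Return the artifacts whose age exceeds the retention period for their kind.

    Kinds without a configured period are never flagged, so an unconfigured
    kind should be treated as a policy gap to fix, not as permission to keep data.
    """
    return [
        a for a in artifacts
        if a.kind in retention and now - a.collected_at > retention[a.kind]
    ]


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
items = [
    CandidateArtifact("cand-001", "voice", datetime(2024, 1, 1, tzinfo=timezone.utc)),
    CandidateArtifact("cand-002", "resume", datetime(2024, 12, 1, tzinfo=timezone.utc)),
]
for artifact in due_for_purge(items, now):
    print(artifact.candidate_id, artifact.kind)
```

Passing `now` in explicitly keeps the rule testable, which matters when you need to show an auditor that the purge logic behaves as documented.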

Common Questions on Framework Implementation

Q: Does using Upfound AI remove the need for an internal DPO?

No tool replaces internal governance. Upfound AI automates the heavy lifting of compliance, providing instant, unbiased scoring and secure data handling, but your DPO ensures these tools align with your specific company policies and local laws.

Q: How do we handle “Human-in-the-Loop” at scale?

We recommend a “Tiered Review” policy. Allow AI to automate scheduling and initial screening, but mandate human validation for the top 20% of candidates and a random audit of the bottom 10% to ensure no qualified talent is slipping through the cracks due to algorithmic error.
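A rough sketch of how such a tiered split might be computed from a batch of AI scores follows. The function name, the rounding rule (round up), and the `audit_pct` parameter (what share of the bottom tier gets sampled) are assumptions added for illustration; the policy above only fixes the 20% and 10% tiers.

```python
import random


def tiered_review(scores, top_pct=20, bottom_pct=10, audit_pct=50, seed=None):
    """Split scored candidates into a human-validation tier and a random audit sample.

    `scores` maps candidate id -> AI score. Percentages are integers and
    tier sizes are rounded up so small batches still get reviewed.
    """
    ranked = sorted(scores, key=scores.get, reverse=True)
    n = len(ranked)
    n_top = (n * top_pct + 99) // 100        # integer ceiling of n * top_pct / 100
    n_bottom = (n * bottom_pct + 99) // 100

    top = ranked[:n_top]
    # Exclude anyone already in the top tier (only matters for tiny batches).
    bottom = [c for c in ranked[n - n_bottom:] if c not in top] if n_bottom else []

    k = (len(bottom) * audit_pct + 99) // 100
    audit = random.Random(seed).sample(bottom, k)
    return {"human_validation": top, "random_audit": audit}


batch = {f"cand-{i:02d}": 100 - 5 * i for i in range(20)}
plan = tiered_review(batch, seed=7)
print(plan["human_validation"])
print(plan["random_audit"])
```

Fixing the seed makes an audit sample reproducible for later inspection; in production you would more likely log the sampled ids than reuse a seed.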

Q: Is our data used to train public models?

Security-first platforms like Upfound AI isolate customer data. Ensure your internal policy explicitly prohibits entering PII (Personally Identifiable Information) into public, open-source AI tools.

Moving from Risk to Readiness

Building this framework isn’t just about avoiding fines; it’s about building a hiring engine that is predictable, fair, and scalable. By addressing these operational gaps now, you transform compliance from a bottleneck into a competitive advantage.